Making the numbers count

Service providers are generating and collecting more data than ever before, and analysis of this data has become a standard feature of many evaluations. While these data sets are an important source of information for evaluation, they are not always in the most appropriate format. When evaluation teams and evaluation commissioners are not sufficiently prepared for administrative data analysis, evaluation time is lost and the quality of insights that could be gained about participants, their profiles, program engagement and outcomes is reduced. Being prepared for administrative data analysis is critical, especially if there are tight timeframes or deadlines in place.

To help evaluators and evaluation commissioners better prepare for the administrative data component of evaluation, we presented the following six tips at the 2019 Australian Evaluation Conference in Sydney. They are designed to help you understand the strengths and weaknesses of the data available and enable efficient evaluation processes and data sharing.

- Define what success looks like: How success is defined will influence the methods (data sources) and analysis used to measure it. Defining your expected outcomes will help frame the evaluation. Program logics can help you do this.

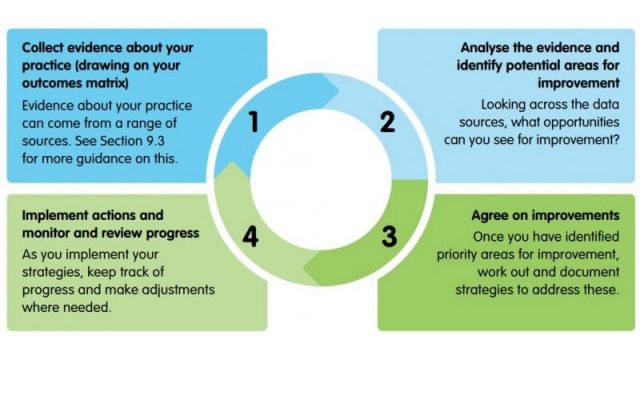

- Know whether you collect outcomes data: Once you have defined success and outcomes, check whether you have any data measures available to capture outcomes. These enable the evaluation to readily assess whether your expected outcomes were achieved. If you don’t have any measures in place, think about proxies. Outcomes matrices are a useful tool for mapping your data collection tools.

- Befriend your data custodian: It’s important to make your data custodian your friend, ideally in the evaluation commission phase. Know who they are, the data they have available, and how to work with them. They can tell you how long it will take to get the data and any considerations related to permissions, privacy and security, all of which will impact on your evaluation timeframes and ethics. Useful questions to ask your data custodian might be, ‘What is the unit of data collection? How often does data entry occur and how is it verified? Who needs to sign off on the data shared to evaluators? Do clients consent to this data being released? Is the data deidentified?’

- Develop a data dictionary: Knowing exactly what variables are collected, how the data is captured, and the available response options will stand your evaluation in good stead. It can save the evaluation from creating tools like retrospective pre-post surveys when a good data system could answer those questions more reliably. Your data custodian may have specifications or documentation on your data. If there isn’t an existing data dictionary available, statistical programs can also show you the variables and response options in a database.

- Understand the limitations: A range of factors affect data quality, from unreliable variables and response categories to inconsistent data entry and poor data verification. Understanding these, as well as your counting rules, will help ensure you count and report on the data as accurately as possible. Again, your data custodian should be able to help you with this.

- Allow enough time for data entry and maturity: Like all good things, administrative data takes time to mature. Frontline staff may update their online files at the end of each month (not continuously), and if they work long shifts or have time off, this may not happen until the following month. Files may then need to be verified and signed off by a supervisor. Paper-based surveys are often entered even less frequently. We find that a six-week maturation period before data extraction is a minimum, and three months is better. This should be factored into program and evaluation design.
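Two of the tips above lend themselves to a quick illustration: drafting a data dictionary when none exists, and working out the earliest sensible extraction date. The following is a minimal Python sketch only, not a prescribed method. The sample extract, its column names (`client_id`, `housing_status`, `exit_reason`) and the function names are hypothetical, and the six-week default simply mirrors the minimum suggested above.

```python
import csv
import io
from collections import Counter
from datetime import date, timedelta

# Hypothetical de-identified extract; a real one would come from your data custodian.
SAMPLE = """client_id,housing_status,exit_reason
001,Renting,Completed
002,renting,
003,Homeless,Withdrew
004,Renting,Completed
"""


def build_data_dictionary(csv_text, max_options=10):
    """Summarise each variable: missing count, distinct count, response options."""
    reader = csv.DictReader(io.StringIO(csv_text))
    fields = reader.fieldnames
    counts = {name: Counter() for name in fields}
    missing = {name: 0 for name in fields}
    for row in reader:
        for name in fields:
            value = (row[name] or "").strip()
            if value:
                counts[name][value] += 1
            else:
                missing[name] += 1
    dictionary = {}
    for name in fields:
        options = sorted(counts[name])
        dictionary[name] = {
            "n_missing": missing[name],
            "n_distinct": len(options),
            # Only list options for variables that look categorical.
            "options": options if len(options) <= max_options else None,
        }
    return dictionary


def earliest_extraction_date(period_end, maturation_weeks=6):
    """Return the first date on which data for the period has had time to mature."""
    return period_end + timedelta(weeks=maturation_weeks)


if __name__ == "__main__":
    for name, summary in build_data_dictionary(SAMPLE).items():
        print(name, summary)
    print(earliest_extraction_date(date(2019, 6, 30)))
```

In this made-up extract, the listing for `housing_status` shows both `Renting` and `renting`, the kind of inconsistent data entry worth raising with your data custodian before analysis begins.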

In conclusion, prepare early by defining program success (for whom it is successful, for whom it is not, and why) and how to measure it. Identify, know and involve your data custodians to the extent that you can in these processes. By building a bridge between the evaluators and the data custodians, you will help your evaluation run more smoothly and unlock the potential of your administrative data to measure success.