Using developmental evaluation to support inclusion

Increasingly, we’re working with non-government organisations to develop and refine initiatives that support people with disability and increase community inclusion.

At the start, it isn’t always clear what these initiatives will look like, how they’ll be delivered, or even what outcomes they’ll aim to achieve. As with most community development, they take a co-design approach to develop activities and outcomes iteratively, in response to the needs and interests of those involved.

In this context, a traditional approach to evaluation isn’t appropriate. In that approach, criteria for success, a program logic or a theory of change are defined at the outset, and performance is judged against those pre-determined criteria at the end. So how can evaluation help? Enter developmental evaluation.

What is developmental evaluation?

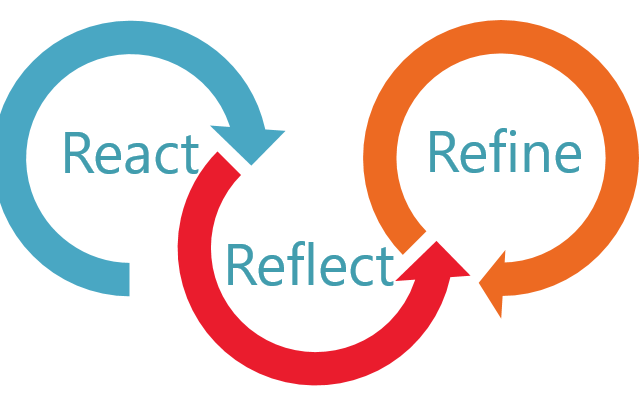

Developmental evaluation, established by Michael Quinn Patton, can support the development of social innovations in changing and complex contexts – where there are interdependencies and no central control. Evaluators work with program teams to review data in real time and reflect on what it means for them.

We’ve found the following questions support the ‘reflection-development’ loop:

- What? What does the data tell us? What is changing? What is remaining the same? What cues are there to emerging patterns?

- So what? What is the value of what we are doing? What do these findings mean to us now and into the future? What effects are current changes likely to have on us, program participants and broader communities?

- Now what? What does this mean for how we should act to optimise opportunities now? What are our options?[1]

To support the iterative process, developmental evaluators work in partnership with program teams, communicating regularly and being flexible about data collection methods to respond to emerging needs.

What does it look like in practice?

ARTD is working with Dementia Australia and its Dementia Advisory Group on a developmental evaluation of the Dementia Friendly Communities program. The program, funded by the Department of Health, aims to support communities across Australia to become more dementia-friendly.

It was clear from the start that there were different understandings about what makes a community ‘Dementia Friendly’ and what the priorities were for the program. So, we worked with Dementia Australia to source and understand input from people with dementia and their families, professionals and community members to inform the project design and activities. Three interrelated program components have been iteratively developed:

- The Hub—an online resource centre for information about becoming dementia friendly, resources to empower people with dementia as advocates, and connecting people within and across locations.

- The training program—face-to-face and online education sessions to inform individuals, businesses and organisations about being dementia friendly.

- Community engagement program grants—funding for 21 organisations to undertake dementia friendly initiatives in their community.

The initial request was to provide six-monthly progress reports – drawing on user surveys and administrative data about the Hub. However, we found that this wasn’t frequent enough in the early stages of rollout, so we looked at the data more regularly to understand patterns of uptake and interaction with the Hub, as well as drop-off points, to inform ongoing promotion and rollout.

The community engagement program attracted far more applications than originally expected, so our team became involved in refining the assessment process.

Talking to the teams, ARTD and Dementia Australia also realised that the stories coming out of the community engagement projects could be used not only for the evaluation, but to support broader public engagement with the program – by helping other communities understand what ‘Dementia Friendly’ might mean in practice. So, we updated our case study approach to include the production of videos that could capture stories of change over a 12-month period. This approach capitalised on the evaluation data collection process to support project implementation.

Our visits to five communities for the video production proved extremely valuable to project teams. They found that the reflective interview process prompted them to think critically about their goals, how they planned to meet these, what would be feasible and sustainable, and what data they should collect from the outset. During the visits, we also noticed that communities were facing some common challenges in designing and delivering their projects, and that others had found ways to overcome these. So, we hosted a webinar for all of the project teams to share their learnings and top tips, and will synthesise and circulate the findings to all.

What have we learned?

In a developmental evaluation, you become part of the team rather than an independent assessor. This doesn’t mean that you don’t offer a critical eye, but that you’re involved in working out how to apply your evaluation findings. Trusting relationships and open communication are crucial to joint critical reflection and the development of creative solutions.

While it’s important to have a plan, developmental evaluation requires flexibility – checking in regularly and evolving your data collection methods to focus on what matters most. This is a balancing act and involves regular communication and project rescoping to manage the overall budget.

Developmental evaluation can be more complicated to manage than a traditional evaluation approach, but it’s extremely rewarding to see evaluation used in real time to inform the ongoing development of an initiative so that it best meets the needs of those involved.

If you’re involved in community development for inclusion, you might find these developmental evaluation resources by BetterEvaluation useful.

[1] Adapted from Gamble (2008), A Developmental Evaluation Primer; and McKegg and Wehipeihana, Developmental Evaluation: A Practitioner’s Introduction.