Enhancing Evaluative Thinking and Reasoning

We like to focus on doing evaluations that are useful and used. One of the ways to improve evaluation utility is to make feasible, pragmatic recommendations. But how do we get to feasible recommendations? How can we ensure they’re widely acceptable to evaluation stakeholders?

In short, we need to enhance our evaluative thinking.

To do just that, we recently attended the AES workshop on Enhancing Evaluative Thinking and Reasoning, presented by Anne Markiewicz.

Lines of inquiry: Deductive and inductive reasoning

Evaluative thinking uses both deductive and inductive reasoning. Deductive reasoning involves identifying criteria of merit and standards, measuring performance against those standards and synthesising the data. Inductive reasoning involves rendering a judgement based on the evidence, applying values, beliefs and expectations to the data. As evaluators, using both styles allows us to both test and develop theories.

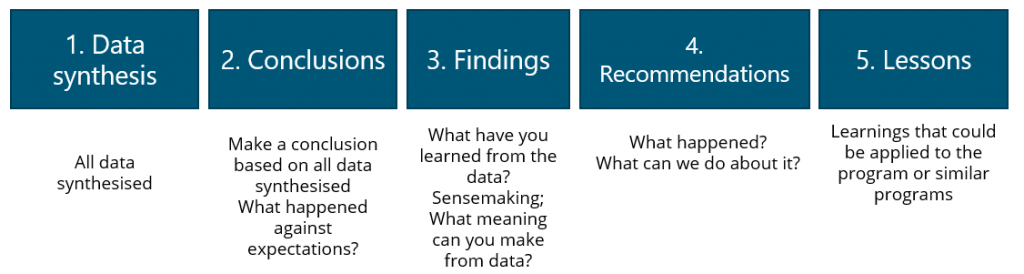

Anne provided a framework for evaluative thinking and reasoning, outlined in Figure 1, which helps to guide the process of answering key evaluation questions. The framework can be used internally, but also to show clients during the discussion of early findings, to bring them along on the evaluation journey.

FIGURE 1. FRAMEWORK FOR EVALUATIVE THINKING [1]

It goes without saying that answering evaluation questions requires good data. To answer a set of key evaluation questions, it is important that we understand what data we have (or need to collect), and which specific evaluation questions that data relates to. If the data we have doesn’t sufficiently relate to our key evaluation questions, we may need to change our data collection methods or explore additional ones.

The workshop was a good opportunity to discuss different ways that we can collect, analyse and synthesise data to identify findings so we can make recommendations that are feasible.

Negotiating unexpected findings

In part two of the workshop, we considered how we can ensure evaluations are independent, that is, unbiased and objective assessments. Evaluation work is most often challenged when its findings or recommendations are considered negative or politically sensitive, or are not what a client was expecting. In small groups, we discussed ways to anticipate or prevent this.

Good communication throughout the life of a project is critically important, particularly when it comes to sharing findings. It’s even more important if what we’re finding could be viewed as negative. Actively discussing findings gives our clients an opportunity to clarify any points of uncertainty (or provide us with additional data). Some clients might want to make in-flight adjustments to program delivery, so these check-in points let them use initial feedback and findings to improve a program or policy before the evaluation is completed.

Enhance your thinking: phone a friend (or a Reference Group)

For some evaluations, independent evaluation reference groups or oversight committees can be helpful in contributing to evaluative thinking and sharing useful insights at each step of the framework.

At ARTD, we have benefitted greatly from the feedback and knowledge of our Aboriginal Reference Group as part of our evaluation of the Future Directions for Social Housing in NSW program implemented by the Department of Communities and Justice. For example, members of this group have advised us on, and helped us decide: our five research locations; the stakeholders we should seek to engage; how we engage with local government and with Aboriginal community members and stakeholders; and other important local considerations.

We look forward to using some of these approaches in our upcoming evaluation work and to participating in future AES workshops!

[1] Anne Markiewicz, Enhancing Evaluative Thinking and Reasoning, AES Workshop, 12 and 19 October 2020, https://www.youtube.com/watch?v=TmC5LvfOTfM